| (13 intermediate revisions by 5 users not shown) | |||

| Line 1: | Line 1: | ||

| − | [ | + | <center><font size= 4> |

| + | '''[[ECE662]]: Statistical Pattern Recognition and Decision Making Processes''' | ||

| + | </font size> | ||

| − | [ | + | Spring 2008, [[user:mboutin|Prof. Boutin]] |

| + | [[Slectures|Slecture]] | ||

| + | |||

| + | <font size= 3> Collectively created by the students in [[ECE662:BoutinSpring08_OldKiwi|the class]]</font size> | ||

| + | </center> | ||

| + | |||

| + | ---- | ||

| + | =Lecture 15 Lecture notes= | ||

| + | Jump to: [[ECE662_Pattern_Recognition_Decision_Making_Processes_Spring2008_sLecture_collective|Outline]]| | ||

| + | [[Lecture 1 - Introduction_OldKiwi|1]]| | ||

| + | [[Lecture 2 - Decision Hypersurfaces_OldKiwi|2]]| | ||

| + | [[Lecture 3 - Bayes classification_OldKiwi|3]]| | ||

| + | [[Lecture 4 - Bayes Classification_OldKiwi|4]]| | ||

| + | [[Lecture 5 - Discriminant Functions_OldKiwi|5]]| | ||

| + | [[Lecture 6 - Discriminant Functions_OldKiwi|6]]| | ||

| + | [[Lecture 7 - MLE and BPE_OldKiwi|7]]| | ||

| + | [[Lecture 8 - MLE, BPE and Linear Discriminant Functions_OldKiwi|8]]| | ||

| + | [[Lecture 9 - Linear Discriminant Functions_OldKiwi|9]]| | ||

| + | [[Lecture 10 - Batch Perceptron and Fisher Linear Discriminant_OldKiwi|10]]| | ||

| + | [[Lecture 11 - Fischer's Linear Discriminant again_OldKiwi|11]]| | ||

| + | [[Lecture 12 - Support Vector Machine and Quadratic Optimization Problem_OldKiwi|12]]| | ||

| + | [[Lecture 13 - Kernel function for SVMs and ANNs introduction_OldKiwi|13]]| | ||

| + | [[Lecture 14 - ANNs, Non-parametric Density Estimation (Parzen Window)_OldKiwi|14]]| | ||

| + | [[Lecture 15 - Parzen Window Method_OldKiwi|15]]| | ||

| + | [[Lecture 16 - Parzen Window Method and K-nearest Neighbor Density Estimate_OldKiwi|16]]| | ||

| + | [[Lecture 17 - Nearest Neighbors Clarification Rule and Metrics_OldKiwi|17]]| | ||

| + | [[Lecture 18 - Nearest Neighbors Clarification Rule and Metrics(Continued)_OldKiwi|18]]| | ||

| + | [[Lecture 19 - Nearest Neighbor Error Rates_OldKiwi|19]]| | ||

| + | [[Lecture 20 - Density Estimation using Series Expansion and Decision Trees_OldKiwi|20]]| | ||

| + | [[Lecture 21 - Decision Trees(Continued)_OldKiwi|21]]| | ||

| + | [[Lecture 22 - Decision Trees and Clustering_OldKiwi|22]]| | ||

| + | [[Lecture 23 - Spanning Trees_OldKiwi|23]]| | ||

| + | [[Lecture 24 - Clustering and Hierarchical Clustering_OldKiwi|24]]| | ||

| + | [[Lecture 25 - Clustering Algorithms_OldKiwi|25]]| | ||

| + | [[Lecture 26 - Statistical Clustering Methods_OldKiwi|26]]| | ||

| + | [[Lecture 27 - Clustering by finding valleys of densities_OldKiwi|27]]| | ||

| + | [[Lecture 28 - Final lecture_OldKiwi|28]] | ||

| + | ---- | ||

| + | ---- | ||

Figure 1 | Figure 1 | ||

| Line 10: | Line 50: | ||

[[Image:Lec15_comparison_OldKiwi.PNG]] | [[Image:Lec15_comparison_OldKiwi.PNG]] | ||

| + | |||

| + | Parzen Window Method | ||

| + | |||

| + | **Step 1:** Choose "shape" of your window by introducing a "window function" | ||

| + | |||

| + | e.g. if <math>R_i</math> is hybercube in <math>\mathbb{R}^n</math> with side-length <math>h_i</math>, then the window function is <math>\varphi</math>. | ||

| + | |||

| + | <math>\varphi(\vec{u})=\varphi(u_1, u_2, \ldots, u_n)=1</math> if <math>|u_i|<\frac{1}{2}, \forall i</math> otherwise 0. | ||

| + | |||

| + | Examples of Parzen windows | ||

| + | |||

| + | |||

| + | |||

| + | [[Image:Lec15_square_OldKiwi.jpg]] | ||

| + | |||

| + | [[Image:Lec15_square3D_OldKiwi.jpg]] | ||

| + | |||

| + | Given the shape for parzen window by <math>\varphi</math>, we can scale and shift it as required by the method. | ||

| + | |||

| + | <math>\varphi(\frac{\vec{x}-\vec{x_0}}{h_i})</math> is window centered at <math>\vec{x_0}</math> scaled by a factor <math>h_i</math>, i.e. its side-length is <math>h_i</math>. | ||

| + | |||

| + | [[Image:Lec15_shiftWindow_OldKiwi.jpg]] | ||

| + | |||

| + | **Step 2:** Write the density estimate of <math>p(\vec{x})</math> at <math>\vec{x_0} \in R_i</math> using window function, denoted by <math>p_i(\vec{x_0})</math>. | ||

| + | |||

| + | We have number of samples for <math>\{\vec{x_1}, \vec{x_2}, \ldots, \vec{x_i}\}</math> inside <math>R_i</math> denoted by <math>K_i</math> | ||

| + | |||

| + | <math>\sum_{l=1}^{i}\varphi(\frac{\vec{x_l}-\vec{x_0}}{h_i})</math> | ||

| + | |||

| + | So, <math>p_i(\vec{x_0})=\frac{k_i}{iV_i}=\frac{1}{iV_i}\sum_{l=1}^{i}\varphi(\frac{\vec{x_l}-\vec{x_0}}{h_i})</math> | ||

| + | |||

| + | Let <math>\delta_i(\vec{u})=\frac{1}{V_i}\varphi(\frac{\vec{u}}{h_i})</math> | ||

| + | |||

| + | <math>p_i(\vec{x_0})=\frac{1}{i}\sum_{l=1}^{i}\delta_i(\vec{x_l}-\vec{x_0})</math> | ||

| + | |||

| + | This last equation is an average over impulses. For any l, <math>\lim_{h_i->0}\delta(\vec{x_l}-\vec{x_0})</math> is [Dirac delta Function]. We do not want to average over dirac delta functions. Our objective is that <math>p_i(\vec{x_0})</math> should converge to true value <math>p(\vec{x})</math>, as <math>i\rightarrow \infty</math> | ||

| + | |||

| + | [[Image:Lec15_dirac_OldKiwi.jpg]] | ||

| + | |||

| + | **What does convergence mean here?** | ||

| + | Observe <math>\{p_i(\vec{x_0})\}</math> is a sequence of random variables since <math>p_i(\vec{x_0})</math> depends on random variables |sample_space_i|. | ||

| + | What do we mean by convergence of a sequence of random variables (There are many definitions). We pick "Convergence in mean square" sense, i.e. | ||

| + | |||

| + | If <math>\lim_{i\rightarrow \infty}E\{p_i(\vec{x_0})\}=p(\vec{x_0})</math> | ||

| + | |||

| + | and <math>\lim_{i\rightarrow \infty}Var\{p_i(\vec{x_0})\}=0</math> | ||

| + | |||

| + | then we say <math>p_i(\vec{x_0}) \longrightarrow p(\vec{x_0})</math> in mean square as <math>i\rightarrow \infty</math> | ||

| + | |||

| + | **First condition:** | ||

| + | From the previous result, |jinha_pix0| | ||

| + | |||

| + | |||

| + | <math>\displaystyle p_i (x_0) = \frac{1}{i} \sum_{l=1}^{i} \delta_i (\vec{x}_l - \vec{x}_0)</math> | ||

| + | |||

| + | We don't need an infinity number of samples to make <math>E(p_i(\vec{x_o}))</math> converge to <math>p(\vec{x_o})</math> as <math>i\to\infty</math>. | ||

| + | |||

| + | We just need <math>h_i \to\infty</math> (iie. <math>V_i\to\infty</math>) | ||

| + | |||

| + | **To make it sure** |jinha_varpix0|, what should we do? | ||

| + | <math>Var(p_i(x_0)) \rightarrow 0</math> | ||

| + | |||

| + | |||

| + | <math>\displaystyle Var(p_i(x_0)) = Var(\sum_{l=1}^{i} \frac{1}{i} \delta_i(\vec{x}_l - \vec{x}_0)) = \sum_{l=1}^{i} Var(\frac{1}{i} \delta_i(\vec{x}_l - \vec{x}_0))</math> | ||

| + | |||

| + | <math>\displaystyle = \sum_{l=1}^{i} E \left[ \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} - E\left[ \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right] \right)^2 \right] = \sum_{l=1}^{i} E \left[ \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right)^2 \right] - \left( E\left[ \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right] \right)^2</math> | ||

| + | |||

| + | We know that second term is non-negative, therefore we can write | ||

| + | |||

| + | <math>\displaystyle Var(p_i(x_0)) \le \sum_{l=1}^{i} E \left[ \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right)^2 \right]</math> | ||

| + | |||

| + | |jinha_varpix0_4| | ||

| + | |||

| + | <math>\displaystyle \rightarrow Var(p_i(x_0)) \le \sum_{l=1}^{i} \int \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right)^2 p(x_l) dx_l</math> | ||

| + | |||

| + | <math>\displaystyle \rightarrow Var(p_i(x_0)) \le \sum_{l=1}^{i} \frac{1}{i^2} \int \frac{\psi \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i}\right)}{V_i} \frac{\psi \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i}\right)}{V_i} p(x_l) dx_l</math> | ||

| + | |||

| + | <math>\displaystyle \rightarrow Var(p_i(x_0)) \le \frac{1}{i V_i} sup\psi \int \sum_{l=1}^{i} \delta_i (x_l - x_0) p(x_l) dx_l</math> | ||

| + | |||

| + | <math>\displaystyle \therefore Var(p_i(x_0)) \le \frac{1}{i V_i} sup\psi E [p_i(x_0)]</math> | ||

| + | |||

| + | |||

| + | If fixed i=d, then as <math>v_i</math> increased, <math>var(P_i (\vec{x_0}))</math> decreased. | ||

| + | |||

| + | But, if <math>i V_i \rightarrow \infty</math> , as <math>i \rightarrow \infty</math> | ||

| + | |||

| + | (for example, if <math>v_i= \frac{1}{\sqrt i}, v_i=\frac{13}{\sqrt i} or \frac{17}{\sqrt i}</math>) | ||

| + | |||

| + | then, <math>var(P_i (\vec{x_0})) \rightarrow 0, as i \rightarrow \infty</math> | ||

| + | |||

| + | Here are some useful links to "Parzen-window Density Estimation" | ||

| + | |||

| + | http://www.cs.utah.edu/~suyash/Dissertation_html/node11.html | ||

| + | |||

| + | http://en.wikipedia.org/wiki/Parzen_window | ||

| + | |||

| + | http://www.personal.rdg.ac.uk/~sis01xh/teaching/CY2D2/Pattern2.pdf | ||

| + | |||

| + | http://www.eee.metu.edu.tr/~alatan/Courses/Demo/AppletParzen.html | ||

| + | ---- | ||

| + | Previous: [[Lecture_14_-_ANNs%2C_Non-parametric_Density_Estimation_(Parzen_Window)_OldKiwi|Lecture 14]] | ||

| + | Next: [[Lecture_16_-_Parzen_Window_Method_and_K-nearest_Neighbor_Density_Estimate_OldKiwi|Lecture 16]] | ||

| + | |||

| + | [[ECE662:BoutinSpring08_OldKiwi|Back to ECE662 Spring 2008 Prof. Boutin]] | ||

| + | [[Category:ECE662]] | ||

| + | [[Category:decision theory]] | ||

| + | [[Category:lecture notes]] | ||

| + | [[Category:pattern recognition]] | ||

| + | [[Category:slecture]] | ||

Latest revision as of 11:21, 10 June 2013

ECE662: Statistical Pattern Recognition and Decision Making Processes

Spring 2008, Prof. Boutin

Collectively created by the students in the class

Lecture 15 Lecture notes

Jump to: Outline| 1| 2| 3| 4| 5| 6| 7| 8| 9| 10| 11| 12| 13| 14| 15| 16| 17| 18| 19| 20| 21| 22| 23| 24| 25| 26| 27| 28

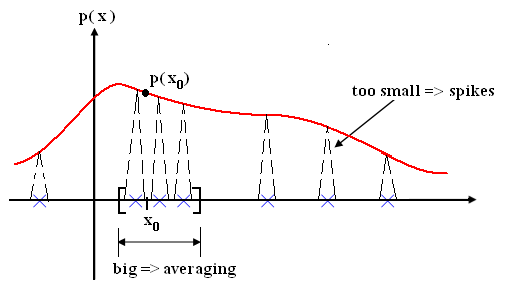

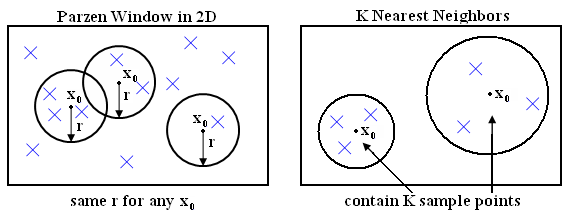

Figure 1

Figure 2

Parzen Window Method

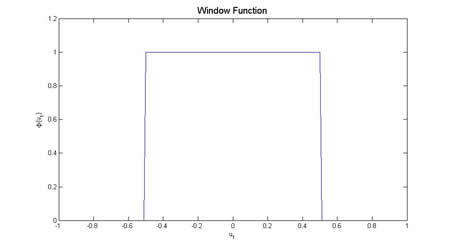

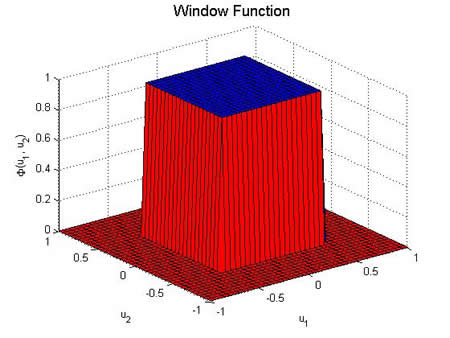

- Step 1:** Choose "shape" of your window by introducing a "window function"

e.g. if $ R_i $ is hybercube in $ \mathbb{R}^n $ with side-length $ h_i $, then the window function is $ \varphi $.

$ \varphi(\vec{u})=\varphi(u_1, u_2, \ldots, u_n)=1 $ if $ |u_i|<\frac{1}{2}, \forall i $ otherwise 0.

Examples of Parzen windows

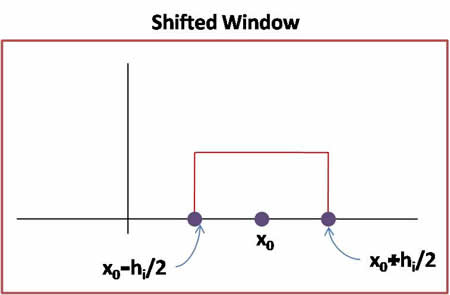

Given the shape for parzen window by $ \varphi $, we can scale and shift it as required by the method.

$ \varphi(\frac{\vec{x}-\vec{x_0}}{h_i}) $ is window centered at $ \vec{x_0} $ scaled by a factor $ h_i $, i.e. its side-length is $ h_i $.

- Step 2:** Write the density estimate of $ p(\vec{x}) $ at $ \vec{x_0} \in R_i $ using window function, denoted by $ p_i(\vec{x_0}) $.

We have number of samples for $ \{\vec{x_1}, \vec{x_2}, \ldots, \vec{x_i}\} $ inside $ R_i $ denoted by $ K_i $

$ \sum_{l=1}^{i}\varphi(\frac{\vec{x_l}-\vec{x_0}}{h_i}) $

So, $ p_i(\vec{x_0})=\frac{k_i}{iV_i}=\frac{1}{iV_i}\sum_{l=1}^{i}\varphi(\frac{\vec{x_l}-\vec{x_0}}{h_i}) $

Let $ \delta_i(\vec{u})=\frac{1}{V_i}\varphi(\frac{\vec{u}}{h_i}) $

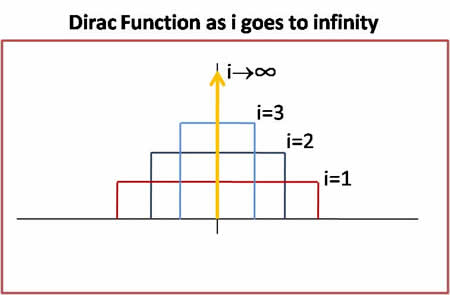

$ p_i(\vec{x_0})=\frac{1}{i}\sum_{l=1}^{i}\delta_i(\vec{x_l}-\vec{x_0}) $

This last equation is an average over impulses. For any l, $ \lim_{h_i->0}\delta(\vec{x_l}-\vec{x_0}) $ is [Dirac delta Function]. We do not want to average over dirac delta functions. Our objective is that $ p_i(\vec{x_0}) $ should converge to true value $ p(\vec{x}) $, as $ i\rightarrow \infty $

- What does convergence mean here?**

Observe $ \{p_i(\vec{x_0})\} $ is a sequence of random variables since $ p_i(\vec{x_0}) $ depends on random variables |sample_space_i|. What do we mean by convergence of a sequence of random variables (There are many definitions). We pick "Convergence in mean square" sense, i.e.

If $ \lim_{i\rightarrow \infty}E\{p_i(\vec{x_0})\}=p(\vec{x_0}) $

and $ \lim_{i\rightarrow \infty}Var\{p_i(\vec{x_0})\}=0 $

then we say $ p_i(\vec{x_0}) \longrightarrow p(\vec{x_0}) $ in mean square as $ i\rightarrow \infty $

- First condition:**

From the previous result, |jinha_pix0|

$ \displaystyle p_i (x_0) = \frac{1}{i} \sum_{l=1}^{i} \delta_i (\vec{x}_l - \vec{x}_0) $

We don't need an infinity number of samples to make $ E(p_i(\vec{x_o})) $ converge to $ p(\vec{x_o}) $ as $ i\to\infty $.

We just need $ h_i \to\infty $ (iie. $ V_i\to\infty $)

- To make it sure** |jinha_varpix0|, what should we do?

$ Var(p_i(x_0)) \rightarrow 0 $

$ \displaystyle Var(p_i(x_0)) = Var(\sum_{l=1}^{i} \frac{1}{i} \delta_i(\vec{x}_l - \vec{x}_0)) = \sum_{l=1}^{i} Var(\frac{1}{i} \delta_i(\vec{x}_l - \vec{x}_0)) $

$ \displaystyle = \sum_{l=1}^{i} E \left[ \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} - E\left[ \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right] \right)^2 \right] = \sum_{l=1}^{i} E \left[ \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right)^2 \right] - \left( E\left[ \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right] \right)^2 $

We know that second term is non-negative, therefore we can write

$ \displaystyle Var(p_i(x_0)) \le \sum_{l=1}^{i} E \left[ \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right)^2 \right] $

|jinha_varpix0_4|

$ \displaystyle \rightarrow Var(p_i(x_0)) \le \sum_{l=1}^{i} \int \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i} \right)^2 p(x_l) dx_l $

$ \displaystyle \rightarrow Var(p_i(x_0)) \le \sum_{l=1}^{i} \frac{1}{i^2} \int \frac{\psi \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i}\right)}{V_i} \frac{\psi \left( \frac{\delta_i(\vec{x}_l - \vec{x}_0)}{i}\right)}{V_i} p(x_l) dx_l $

$ \displaystyle \rightarrow Var(p_i(x_0)) \le \frac{1}{i V_i} sup\psi \int \sum_{l=1}^{i} \delta_i (x_l - x_0) p(x_l) dx_l $

$ \displaystyle \therefore Var(p_i(x_0)) \le \frac{1}{i V_i} sup\psi E [p_i(x_0)] $

If fixed i=d, then as $ v_i $ increased, $ var(P_i (\vec{x_0})) $ decreased.

But, if $ i V_i \rightarrow \infty $ , as $ i \rightarrow \infty $

(for example, if $ v_i= \frac{1}{\sqrt i}, v_i=\frac{13}{\sqrt i} or \frac{17}{\sqrt i} $)

then, $ var(P_i (\vec{x_0})) \rightarrow 0, as i \rightarrow \infty $

Here are some useful links to "Parzen-window Density Estimation"

http://www.cs.utah.edu/~suyash/Dissertation_html/node11.html

http://en.wikipedia.org/wiki/Parzen_window

http://www.personal.rdg.ac.uk/~sis01xh/teaching/CY2D2/Pattern2.pdf

http://www.eee.metu.edu.tr/~alatan/Courses/Demo/AppletParzen.html

Previous: Lecture 14 Next: Lecture 16