K-Nearest Neighbors Density Estimation

A slecture by CIT student Raj Praveen Selvaraj

Partly based on the ECE662 Spring 2014 lecture material of Prof. Mireille Boutin.


Introduction

This slecture discusses the k-nearest neighbors (k-NN) approach to estimating the density of a given distribution. The k-NN approach is very popular in signal and image processing for clustering and classification of patterns. It is a non-parametric density estimation technique that lets the volume of the region be a function of the training data. We first discuss the basic principle behind the k-NN estimate of the density at a point $x_0$, and then build a classifier using the k-NN density estimate.

Basic Principle

The general formulation for density estimation states that, for $N$ observations $x_1, x_2, \dots, x_N$, the density at a point $x_0$ can be approximated by

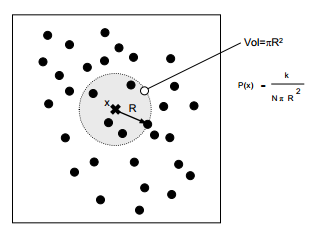

where $V$ is the volume of some neighborhood around $x_0$ and $k$ denotes the number of observations contained within the neighborhood. The basic idea of k-NN is to grow the neighborhood until the $k$ nearest samples are included, where $k \geq 2$. If we take the neighborhood around $x_0 \in \mathbb{R}^n$ to be a sphere of radius $h$, its volume is given by

$ V = \frac{\pi^{n/2}}{\Gamma\left(\frac{n}{2}+1\right)} h^n $

where $ \Gamma (n) = (n - 1)! $ for positive integers $n$. There are $k$ samples within the sphere of radius $h$ (including its boundary). This is illustrated in the figure below.
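As a numerical companion to the volume formula, here is a minimal Python sketch. The function name `ball_volume` is our own and does not come from the lecture. It uses `math.gamma` because $\frac{n}{2}+1$ is a half-integer whenever $n$ is odd, and the factorial form of $\Gamma$ does not cover half-integers.

```python
import math


def ball_volume(radius: float, dim: int) -> float:
    """Volume of a dim-dimensional sphere (ball) of the given radius.

    Implements V = pi^(n/2) / Gamma(n/2 + 1) * h^n with n = dim and h = radius.
    """
    return math.pi ** (dim / 2) / math.gamma(dim / 2 + 1) * radius ** dim


# Compare against familiar cases (equal up to floating-point rounding).
for dim, expected in [(1, 2.0), (2, math.pi), (3, 4 / 3 * math.pi)]:
    print(dim, ball_volume(1.0, dim), expected)
```

In one dimension the "sphere" is the interval $[x_0 - h, x_0 + h]$, so the volume is $2h$. The first check confirms this.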

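If $x_l$ is the $k$-th closest sample point to $x_0$, then the radius of the sphere is

$ h_k = \| x_l - x_0 \| $

and we write $V_k(x_0)$ for the volume of the sphere of radius $h_k$ centered at $x_0$. We approximate the density $\rho(x_0)$ by

$ \rho_k(x_0) = \frac{k - \#s}{N \, V_k(x_0)} $

where $\#s$ is the number of samples on the boundary of the sphere of radius $h_k$.

Most of the time only $x_l$ lies on the boundary (for a continuous density this happens with probability one). Then $\#s = 1$ and the estimate is

$ \rho_k(x_0) = \frac{k - 1}{N \, V_k(x_0)} $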
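The estimate can be sketched in Python as follows. The sketch assumes Euclidean distance and a brute-force search over all samples. `knn_density` and `ball_volume` are illustrative names of our own. The numerator counts the samples strictly inside the sphere. This equals $k - \#s$ when exactly $k$ samples lie in the closed sphere, and $k - 1$ in the usual case.

```python
import math
import random


def ball_volume(radius, dim):
    """V = pi^(n/2) / Gamma(n/2 + 1) * h^n."""
    return math.pi ** (dim / 2) / math.gamma(dim / 2 + 1) * radius ** dim


def knn_density(x0, samples, k):
    """k-NN density estimate at x0: (samples strictly inside the sphere) / (N * V_k(x0))."""
    n_samples = len(samples)
    if not 2 <= k <= n_samples:
        raise ValueError("k must satisfy 2 <= k <= N")
    dists = sorted(math.dist(x0, x) for x in samples)
    h_k = dists[k - 1]  # radius reaching the k-th nearest sample
    if h_k == 0:
        raise ValueError("k-th nearest sample coincides with x0; volume is zero")
    inside = sum(1 for d in dists if d < h_k)  # k - #s: boundary samples are excluded
    return inside / (n_samples * ball_volume(h_k, len(x0)))


# Usage: uniform samples on the unit square have true density 1 inside it.
rng = random.Random(0)
square = [(rng.random(), rng.random()) for _ in range(5000)]
print(knn_density((0.5, 0.5), square, k=100))  # expected to be near 1.0
```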

Claim: the k-NN estimate $\rho_k(x_0)$ is unbiased, i.e., its expected value equals the actual density at $x_0$:

$ E\left[\rho_k(x_0)\right] = \rho(x_0) $

We will now prove this claim.

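The claim holds only when the window function $ \phi $ defines a region $R$ with well-defined boundaries. That is, $ \phi $ must be the indicator function of $R$: $\phi(x) = 1$ for $x \in R$ and $0$ otherwise.

The random variable here is $V_k(x_0)$, the volume of $R$.

Let $u$ be the probability mass of $R$,

$ u = \int_R \rho(x)\,dx $

and consider a small band along the boundary of $R$.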

Observation: $ u \in [0,1] $

Let $G$ be the event that "$K-1$ samples fall inside $R$"

and let $H$ be the event that "1 sample falls inside the small band along the boundary of $R$".

Then,

$ Prob(G, H) = Prob(G) \cdot Prob(H \mid G) $

$ Prob(G) = \binom{N}{K-1} u^{K-1} (1-u)^{N-K+1} $

$ Prob(H\mid G) = \binom{N-K+1}{1} \left ( \frac{\Delta u}{1-u} \right )\left ( 1 - \frac{\Delta u}{1-u} \right )^{N-K} $

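where $ \Delta u $ is the probability mass of the band,

$ \Delta u = \int_{\text{band}} \rho(x)\,dx $

Given $G$, each of the remaining $N-K+1$ samples lies outside $R$. Each therefore falls in the band with conditional probability $ \frac{\Delta u}{1-u} $. Multiplying the two factors,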

$ Prob(G,H) = \binom{N}{K-1} \binom{N-K+1}{1} \left ( \frac{\Delta u}{1-u} \right ) u^{K-1}\left ( 1 - \frac{\Delta u}{1-u} \right )^{N-K}(1-u)^{N-K+1} $

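The binomial coefficients simplify as

$ \binom{N}{K-1} \binom{N-K+1}{1} = \frac{N!}{(K-1)!(N-K+1)!} \cdot \frac{(N-K+1)!}{1!(N-K)!} = \frac{N!}{(K-1)!(N-K)!} $

so that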
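The simplification of the binomial coefficients can be confirmed with exact integer arithmetic. This only checks the algebra above. It uses Python's `math.comb` and `math.factorial`, and the helper names are our own.

```python
from math import comb, factorial


def coefficient_product(N, K):
    """C(N, K-1) * C(N-K+1, 1): choose the K-1 samples inside R, then the one in the band."""
    return comb(N, K - 1) * comb(N - K + 1, 1)


def simplified_coefficient(N, K):
    """N! / ((K-1)! (N-K)!), the simplified form."""
    return factorial(N) // (factorial(K - 1) * factorial(N - K))


# Exhaustive check over small N and every K from 1 to N.
mismatches = [
    (N, K)
    for N in range(1, 40)
    for K in range(1, N + 1)
    if coefficient_product(N, K) != simplified_coefficient(N, K)
]
print("mismatches:", mismatches)  # prints "mismatches: []"
```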

$ Prob(G,H) = \frac{N!}{(K-1)!(N-K)!} \cdot \Delta u \, (1-u)^{N-K} u^{K-1} \left( 1 - \frac{\Delta u}{1-u} \right)^{N-K} $

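$ \left( 1 - \frac{\Delta u}{1-u} \right)^{N-K} \approx 1 $ when $ \Delta u $ is very small, so

$ Prob(G,H) \approx \Delta u \cdot \frac{N!}{(K-1)!(N-K)!} \, u^{K-1} (1-u)^{N-K} $

In other words, $u$ has the density $ \frac{N!}{(K-1)!(N-K)!} u^{K-1}(1-u)^{N-K} $ on $[0,1]$, which is a Beta$(K, N-K+1)$ density.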
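The sketch below evaluates $Prob(G,H)$ exactly and in approximate form for shrinking $\Delta u$. This shows how good the approximation $\left(1 - \frac{\Delta u}{1-u}\right)^{N-K} \approx 1$ is. The parameter values ($N = 100$, $K = 5$, $u = 0.1$) are arbitrary choices for illustration, and the function names are our own.

```python
from math import comb, factorial


def prob_gh_exact(N, K, u, du):
    """Prob(G, H) with every factor kept."""
    q = du / (1 - u)  # chance that a sample outside R lands in the band
    return (comb(N, K - 1) * comb(N - K + 1, 1) * q * u ** (K - 1)
            * (1 - q) ** (N - K) * (1 - u) ** (N - K + 1))


def prob_gh_approx(N, K, u, du):
    """Prob(G, H) after replacing (1 - du/(1-u))^(N-K) by 1."""
    coeff = factorial(N) // (factorial(K - 1) * factorial(N - K))
    return du * coeff * u ** (K - 1) * (1 - u) ** (N - K)


N, K, u = 100, 5, 0.1
ratios = {du: prob_gh_exact(N, K, u, du) / prob_gh_approx(N, K, u, du)
          for du in (1e-2, 1e-4, 1e-6)}
for du, ratio in ratios.items():
    print(f"du={du:g}  exact/approx={ratio:.6f}")
```

The ratio is exactly $\left(1 - \frac{\Delta u}{1-u}\right)^{N-K}$, so it approaches 1 as $\Delta u \to 0$. For a fixed $\Delta u$, it moves further from 1 as $N$ grows.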

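To get the expected value of the k-NN density estimate $ \rho_k(x_0) = \frac{K-1}{N V_k(x_0)} $, we integrate it against the density of $u$ read off from $Prob(G,H)$ above. Assume $ \rho $ is approximately constant over $R$, so that $ u \approx \rho(x_0) V_k(x_0) $. This gives

$ E\left[\rho_k(x_0)\right] = \int_{0}^{1} \frac{(K-1)\rho(x_0)}{N u} \cdot \frac{N!}{(K-1)!(N-K)!} u^{K-1}(1-u)^{N-K} du = \frac{(K-1)\rho(x_0)}{N} \cdot \frac{N!}{(K-1)!(N-K)!} \int_{0}^{1} u^{K-2}(1-u)^{N-K} du $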

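Now, $ \int_{0}^{1}u^{K-2}(1-u)^{N-K}du = \frac{\Gamma (K-1)\Gamma (N-K+1)}{\Gamma (N)} = \frac{(K-2)!(N-K)!}{(N-1)!} $, using $ \Gamma (n) = (n-1)! $. Substituting this into the above equation, we get

$ E\left[\rho_k(x_0)\right] = \frac{(K-1)\rho(x_0)}{N} \cdot \frac{N!}{(K-1)!(N-K)!} \cdot \frac{(K-2)!(N-K)!}{(N-1)!} = \rho(x_0) $

This proves the claim. The step requires $K \geq 2$, since for $K = 1$ the integral diverges.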
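The claim can also be checked by simulation. The sketch below assumes a Uniform(0, 1) density in one dimension. There $\rho$ is exactly constant around $x_0 = 0.5$, so $u = \rho(x_0) V_k(x_0)$ holds exactly. It averages the estimate over many independent training sets. For comparison it also averages the naive estimate $\frac{K}{N V_k(x_0)}$. In this uniform setting the naive mean works out to $\frac{K}{K-1}\rho(x_0)$ (here 1.25) rather than $\rho(x_0)$. The function name and parameter values are our own choices.

```python
import random


def mean_knn_estimates(N, k, trials, seed=0):
    """Average 1-D k-NN estimates at x0 = 0.5 over independent Uniform(0, 1) training sets.

    Returns (mean of (k-1)/(N V), mean of k/(N V)); the true density is 1.
    """
    rng = random.Random(seed)
    x0 = 0.5
    total_unbiased = 0.0
    total_naive = 0.0
    for _ in range(trials):
        dists = sorted(abs(rng.random() - x0) for _ in range(N))
        h_k = dists[k - 1]    # distance from x0 to its k-th nearest sample
        volume = 2 * h_k      # the 1-D "sphere" is the interval [x0 - h_k, x0 + h_k]
        total_unbiased += (k - 1) / (N * volume)
        total_naive += k / (N * volume)
    return total_unbiased / trials, total_naive / trials


mean_unbiased, mean_naive = mean_knn_estimates(N=100, k=5, trials=5000)
print(f"mean of (k-1)/(NV): {mean_unbiased:.3f}")  # close to 1
print(f"mean of k/(NV):     {mean_naive:.3f}")     # close to k/(k-1) = 1.25
```

This is one way to see why the estimate uses $k - 1$ in the numerator and requires $k \geq 2$.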

How to classify data using k-NN Density Estimate

Having seen how the density at a given point $x_0$ is estimated from the value of $k$ and the observations $x_1, x_2, \dots, x_N$, let us now discuss using the k-NN density estimate for classification.

Method 1:

Let $x_0 \in \mathbb{R}^n$ be a point to classify.

For each class $i$, we are given samples $x^i_1, x^i_2, \dots, x^i_{N_i}$.

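We now pick a $k_i$ for each class $i$ and a window function. We approximate the density $\rho_i(x_0)$ of each class at $x_0$ with the k-NN estimate, and pick the class $i_0$ with the largest prior-weighted density,

$ i_0 = \arg\max_i \, \rho_i(x_0) \, Prob(\omega_i) $

where $Prob(\omega_i)$ is the prior probability of class $i$.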

If the priors of the classes are unknown, ROC curves can be used to choose the decision threshold, which in effect fixes the assumed ratio of the priors.
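Method 1 can be sketched as below. The sketch assumes Euclidean distance, a sphere as the window, and the rule "maximize prior times class density", which reduces to picking the largest density when the priors are equal. The names `classify_method1`, `class_samples`, `class_k` and `priors` are hypothetical. Each class gets its own $k_i$, and its density estimate is $\frac{k_i - 1}{N_i V_{k_i}(x_0)}$.

```python
import math


def ball_volume(radius, dim):
    """V = pi^(n/2) / Gamma(n/2 + 1) * h^n."""
    return math.pi ** (dim / 2) / math.gamma(dim / 2 + 1) * radius ** dim


def knn_density(x0, samples, k):
    """(k - 1) / (N * V_k(x0)), assuming one sample on the sphere's boundary."""
    h_k = sorted(math.dist(x0, x) for x in samples)[k - 1]
    return (k - 1) / (len(samples) * ball_volume(h_k, len(x0)))


def classify_method1(x0, class_samples, class_k, priors):
    """Return the class maximizing prior * (k-NN density estimate of that class at x0)."""
    scores = {
        label: priors[label] * knn_density(x0, pts, class_k[label])
        for label, pts in class_samples.items()
    }
    return max(scores, key=scores.get)


# Usage: two 1-D classes, one centred near 0 and one near 5, with equal priors.
class_samples = {
    "a": [(-0.3,), (-0.2,), (-0.1,), (0.0,), (0.1,), (0.2,), (0.3,)],
    "b": [(4.7,), (4.8,), (4.9,), (5.0,), (5.1,), (5.2,), (5.3,)],
}
class_k = {"a": 3, "b": 3}
priors = {"a": 0.5, "b": 0.5}
print(classify_method1((0.05,), class_samples, class_k, priors))  # a
print(classify_method1((4.9,), class_samples, class_k, priors))   # b
```

Near the midpoint between the classes the two density estimates are close, so the priors decide the outcome there.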

Method 2:

We are given samples $x_1, x_2, \dots, x_N$ from a Gaussian mixture, with all classes pooled together and each sample labeled with its class. We choose a single value of $k$ and one window function.

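We then approximate the joint density of $x_0$ and class $i$ by

$ \rho(x_0, i) \approx \frac{k_i}{N \, V_k(x_0)} $

where $V_k(x_0)$ is the volume of the smallest window around $x_0$ that contains $k$ samples, and $k_i$ is the number of samples among these $k$ that belong to class $i$.

We pick a class $i_0$ such that

$ i_0 = \arg\max_i \frac{k_i}{N \, V_k(x_0)} = \arg\max_i k_i $

since $N$ and $V_k(x_0)$ are the same for every class.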

So k-NN classification can also be thought of as assigning $x_0$ to the class that wins the majority vote among its $k$ nearest neighbors.
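Because $N$ and $V_k(x_0)$ are shared by all classes, Method 2 needs only the counts $k_i$. A minimal sketch of the resulting majority vote follows. It assumes Euclidean distance and a brute-force neighbor search. `classify_method2` is a hypothetical name, and the tie-breaking rule is our own choice, since the text does not specify one.

```python
import math
from collections import Counter


def classify_method2(x0, samples, labels, k):
    """Assign x0 to the class with the largest count k_i among its k nearest samples."""
    nearest = sorted(range(len(samples)), key=lambda j: math.dist(x0, samples[j]))[:k]
    votes = Counter(labels[j] for j in nearest)  # votes[i] is k_i
    # most_common keeps first-encountered order among equal counts, and labels are
    # counted in order of distance, so a tie goes to the tied class with the closest member.
    return votes.most_common(1)[0][0]


samples = [(0.0,), (0.1,), (0.2,), (1.0,), (1.1,), (1.2,)]
labels = ["a", "a", "a", "b", "b", "b"]
print(classify_method2((0.15,), samples, labels, k=3))  # a
print(classify_method2((1.05,), samples, labels, k=3))  # b
```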

Conclusion

K-NN is a simple and intuitive algorithm that can be applied to any kind of distribution, since it makes no parametric assumptions. It gives a very good classification rate when the number of samples is large enough, but choosing the best $k$ for the classifier may be difficult. Its time and space costs are also high. A straightforward implementation must store all the training samples and compute the distance from each query point to every one of them, so several optimizations are needed to run it efficiently.

Nevertheless, it is one of the most popular techniques used for classification.

