m |

|||

| (10 intermediate revisions by 4 users not shown) | |||

| Line 1: | Line 1: | ||

| + | [[Category:slecture]] | ||

| + | [[Category:ECE662Spring2014Boutin]] | ||

| + | [[Category:ECE]] | ||

| + | [[Category:ECE662]] | ||

| + | [[Category:pattern recognition]] | ||

| + | |||

= <center>Introduction to local (nonparametric) density estimation methods</center> = | = <center>Introduction to local (nonparametric) density estimation methods</center> = | ||

| − | <center>A | + | <center>A [http://www.projectrhea.org/learning/slectures.php slecture] by Yu Liu |

| + | |||

| + | Partly based on the [[2014_Spring_ECE_662_Boutin_Statistical_Pattern_recognition_slectures|ECE662 Spring 2014 lecture]] material of [[user:mboutin|Prof. Mireille Boutin]]. | ||

| + | |||

| + | </center> | ||

| + | |||

| + | |||

| + | [[Media:Slecture_introduction_to_local_density_estimation_methods.pdf| click here for PDF version]] | ||

---- | ---- | ||

| Line 42: | Line 55: | ||

<center> [[Image:10f.gif|border]] <span style="line-height: 1.5em;">(2)</span></center> | <center> [[Image:10f.gif|border]] <span style="line-height: 1.5em;">(2)</span></center> | ||

Consider a one-dimension case, assume that [[Image:Cf.gif|border]], thus [[Image:Z.gif|border]], where ''h'' is an adjustable constant. Substitute into formula (2) we have | Consider a one-dimension case, assume that [[Image:Cf.gif|border]], thus [[Image:Z.gif|border]], where ''h'' is an adjustable constant. Substitute into formula (2) we have | ||

| − | <center>[[Image:11f.gif|border]]</center> | + | <center>[[Image:11f.gif|border|700x78px]]</center> |

<br>We can see that if ''n'' equals one, ''p''<sub>''n''</sub>(''x'') is just the window function. If ''n'' approaches infinity, ''p''<sub>''n''</sub>(''x'') can converge to any complex form. If ''n'' is relatively small, ''p''<sub>''n''</sub>(''x'') is very sensitive to the value of ''h''. In general small ''h'' leads to the noise error while large ''h'' leads to the over-smoothing error, which can be illustrated by the following example. | <br>We can see that if ''n'' equals one, ''p''<sub>''n''</sub>(''x'') is just the window function. If ''n'' approaches infinity, ''p''<sub>''n''</sub>(''x'') can converge to any complex form. If ''n'' is relatively small, ''p''<sub>''n''</sub>(''x'') is very sensitive to the value of ''h''. In general small ''h'' leads to the noise error while large ''h'' leads to the over-smoothing error, which can be illustrated by the following example. | ||

| Line 71: | Line 84: | ||

<br>The posterior ''p''(''w''<sub>''i''</sub>|''x'') is given by | <br>The posterior ''p''(''w''<sub>''i''</sub>|''x'') is given by | ||

<center> [[Image:17f.gif|border]] <span style="line-height: 1.5em;">(4)</span></center> | <center> [[Image:17f.gif|border]] <span style="line-height: 1.5em;">(4)</span></center> | ||

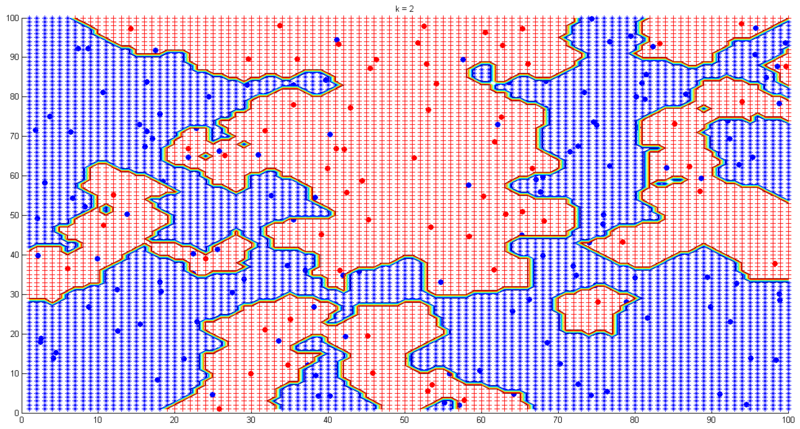

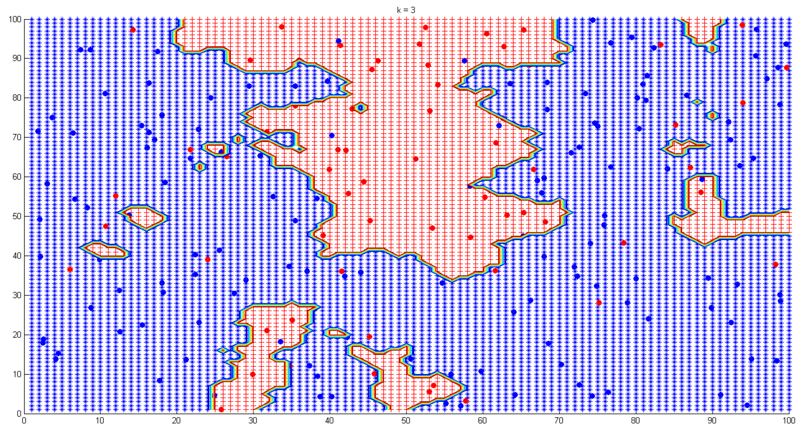

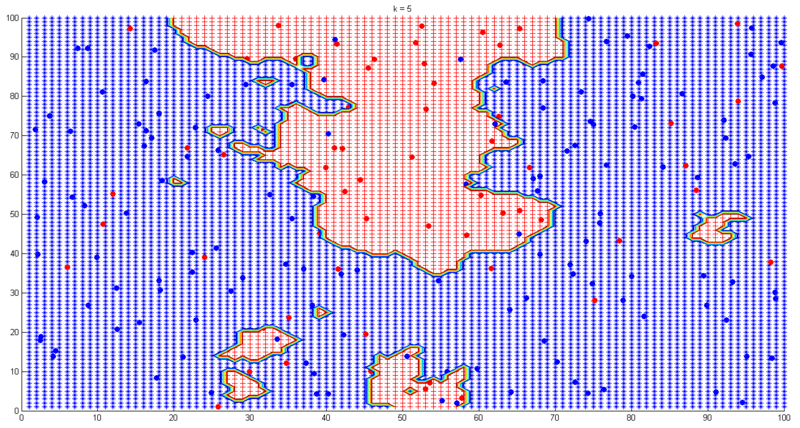

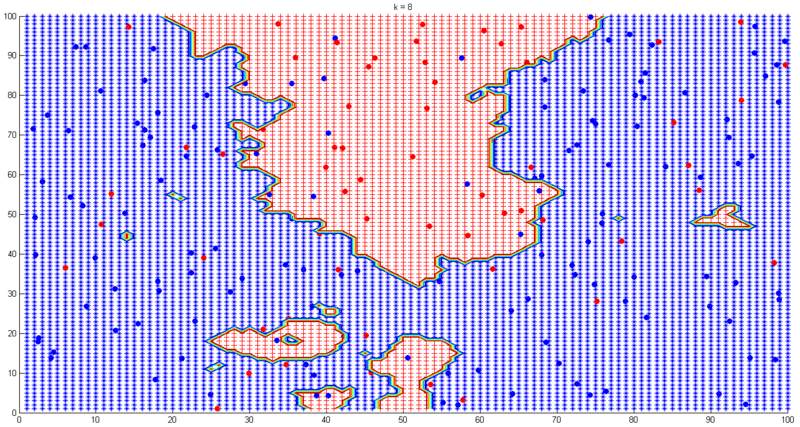

| − | where ''m'' is the number of classes. Formula (4) tells us one simple decision rule: the class of a test point ''x'' is the same as the most frequent one among the nearest ''k'' points of ''x''. Simple and intuitive, isn’t it? Having said that, choosing ''k'' in ''k''-NN is still a nontrivial problem as choosing ''h'' in Parzen density estimation. Small ''k'' leads to noisy decision boundaries while large ''k'' leads to over-smoothed boundaries, which is illustrated by the following example. In this experiment samples are | + | where ''m'' is the number of classes. Formula (4) tells us one simple decision rule: the class of a test point ''x'' is the same as the most frequent one among the nearest ''k'' points of ''x''. Simple and intuitive, isn’t it? Having said that, choosing ''k'' in ''k''-NN is still a nontrivial problem as choosing ''h'' in Parzen density estimation. Small ''k'' leads to noisy decision boundaries while large ''k'' leads to over-smoothed boundaries, which is illustrated by the following example. In this experiment samples are 200 pre-labeled (red or blue) points. The task is to find the classification boundaries under different ''k'' values. Fig. 6-9 show the results. |

| − | + | <center>[[Image:6ly.png|border|center|800x600px]]</center> <center>Figure 6. ''k''-NN decision boundaries experiment (''k''=2)</center> <center></center> <center>[[Image:7ly.png|border|center|800x600px]]</center> <center>Figure 7. ''k''-NN decision boundaries experiment (''k''=3)</center> <center></center> <center>[[Image:8ly.png|border|center|800x600px]]</center> <center>Figure 8. ''k''-NN decision boundaries experiment (''k''=5)</center> <center></center> <center>[[Image:9ly.png|border|center|800x600px]]</center> <center>Figure 9. ''k''-NN decision boundaries experiment (''k''=8)</center> | |

| − | <center>[[Image:6ly.png|border|center|800x600px]]</center> <center>Figure 6. ''k''-NN decision boundaries experiment (''k''=2)</center> | + | |

| − | + | ||

| − | <center></center> <center>[[Image:7ly.png|border|center|800x600px]]</center> <center>Figure 7. ''k''-NN decision boundaries experiment (''k''=3)</center> | + | |

| − | + | ||

| − | <center></center> <center>[[Image:8ly.png|border|center|800x600px]]</center> <center>Figure 8. ''k''-NN decision boundaries experiment (''k''=5)</center> | + | |

| − | + | ||

| − | <center></center> <center>[[Image:9ly.png|border|center|800x600px]]</center> <center>Figure 9. ''k''-NN decision boundaries experiment (''k''=8)</center> | + | |

| − | + | ||

<br> In practice we can use cross-validation to choose the “best” ''k''. Below lists some advantages and disadvantages of ''k''-NN: | <br> In practice we can use cross-validation to choose the “best” ''k''. Below lists some advantages and disadvantages of ''k''-NN: | ||

| Line 90: | Line 95: | ||

[1] Mireille Boutin, “ECE662: Statistical Pattern Recognition and Decision Making Processes,” Purdue University, Spring 2014<br>[2] http://www.cse.buffalo.edu/~jcorso/t/CSE555/files/annote_28feb_nonprm.pdf<br>[3] http://www.csd.uwo.ca/~olga/Courses/CS434a_541a/Lecture6.pdf<br><br><br> | [1] Mireille Boutin, “ECE662: Statistical Pattern Recognition and Decision Making Processes,” Purdue University, Spring 2014<br>[2] http://www.cse.buffalo.edu/~jcorso/t/CSE555/files/annote_28feb_nonprm.pdf<br>[3] http://www.csd.uwo.ca/~olga/Courses/CS434a_541a/Lecture6.pdf<br><br><br> | ||

| + | |||

| + | ---- | ||

| + | ==[[slecture_Introduction_to_local_density_estimation_methods_review|Questions and comments]]== | ||

| + | If you have any questions, comments, etc. please post them on [[slecture_Introduction_to_local_density_estimation_methods_review|this page]]. | ||

Latest revision as of 09:52, 22 January 2015

Contents

Introduction to local (nonparametric) density estimation methods

Partly based on the ECE662 Spring 2014 lecture material of Prof. Mireille Boutin.

1. Introduction

This slecture introduces two local density estimation methods which are Parzen density estimation and k-nearest neighbor density estimation. Local density estimation is also referred to as non-parametric density estimation. To make things clear, let’s first look at parametric density estimation. In parametric density estimation, we can assume that there exists a density function which can be determined by a set of parameters. The set of parameters are estimated from the sample data and are later used in designing the classifier. However, in some practical situations the assumption that there exists a parametric form of the density function does not hold true. For example, it is very hard to fit a multimodal probability distribution with a simple function. In this case, we need to estimate the density function in the nonparametric way, which means that the density function is estimated locally based on a small set of neighboring samples. Because of this locality, local (nonparametric) density estimation is less accurate than parametric density estimation. In the following text the word “local” is preferred over “nonparametric.”

It is noteworthy that it is very difficult to obtain an accurate local density estimation, especially when the dimension of the feature space is high. So why do we bother using local density estimation? This is because our goal is not to get an accurate estimation, but rather to use the estimation to design a well performed classifier. The inaccuracy of local density estimation does not necessarily lead to a poor decision rule.

2. General Principle

In local density estimation the density function pn(x) can be approximated by

(1)

(1)where vn is the volume of a small region R around point x, n is the total number of samples xi (i =1, 2…, n) drawn according to pn(x), and kn is the number of xi’s which fall into region R. The reason why pn(x) can be calculated in this way is that pn(x) does not vary much within a relatively small region, thus the probability mass of region R can be approximated by pn(x)vn, which equals kn/n.

Some examples of region R in different dimensions: i) line segment in one-dimension, ii) circle or rectangle in two-dimension, iii) sphere or cube in three-dimension, iv) hyper sphere or hypercube in d-dimension (d > 3).

Three conditions we need to pay attention to when using formula (1) are:

i)![]() . This is because if vn is fixed, then pn(x) only represents the average probability density as n grows larger, but what we need is the point probability density, so we should have

. This is because if vn is fixed, then pn(x) only represents the average probability density as n grows larger, but what we need is the point probability density, so we should have ![]() when

when ![]() .

.

ii)![]() . This is to make sure that we do not get zero probability density.

. This is to make sure that we do not get zero probability density.

iii)![]() . This is to make sure that pn(x) does not diverge.

. This is to make sure that pn(x) does not diverge.

3. Parzen Density Estimation

In Parzen density estimation vn is directly determined by n while kn is a random variable which denotes the number of samples that fall into vn. Assume that the region R is a d-dimensional hypercube with its edge length hn, thus

The equivalent conditions which meet the aforementioned three conditions are:

Therefore vn can be chosen as ![]() or

or ![]() , where h is an adjustable constant. Now that the relationship between vn and n is defined, the next step is to determine kn. To determine kn, we define a window function as follows:

, where h is an adjustable constant. Now that the relationship between vn and n is defined, the next step is to determine kn. To determine kn, we define a window function as follows:

where xi’s (i = 1, 2, …, n) are the given samples and x is the point where the density is to be estimated. Thus we have

The function ![]() is called a Parzen window function, which enables us to count the number of sample points in the hypercube with its edge length hn. According to [2], using hypercube as the window function may lead to discontinuity in the estimation. This is due to the superimposition of sharp pulses centered at the given sample points when h is small. To overcome this shortcoming, we can consider a more general form of window function rather than the hypercube. Note that if the following two conditions are met, the estimated pn(x) is guaranteed to be proper.

is called a Parzen window function, which enables us to count the number of sample points in the hypercube with its edge length hn. According to [2], using hypercube as the window function may lead to discontinuity in the estimation. This is due to the superimposition of sharp pulses centered at the given sample points when h is small. To overcome this shortcoming, we can consider a more general form of window function rather than the hypercube. Note that if the following two conditions are met, the estimated pn(x) is guaranteed to be proper.

Therefore a better choice of window function which removes discontinuity can be Gaussian window:

The estimated density is given by

(2)

(2)Consider a one-dimension case, assume that ![]() , thus

, thus ![]() , where h is an adjustable constant. Substitute into formula (2) we have

, where h is an adjustable constant. Substitute into formula (2) we have

We can see that if n equals one, pn(x) is just the window function. If n approaches infinity, pn(x) can converge to any complex form. If n is relatively small, pn(x) is very sensitive to the value of h. In general small h leads to the noise error while large h leads to the over-smoothing error, which can be illustrated by the following example.

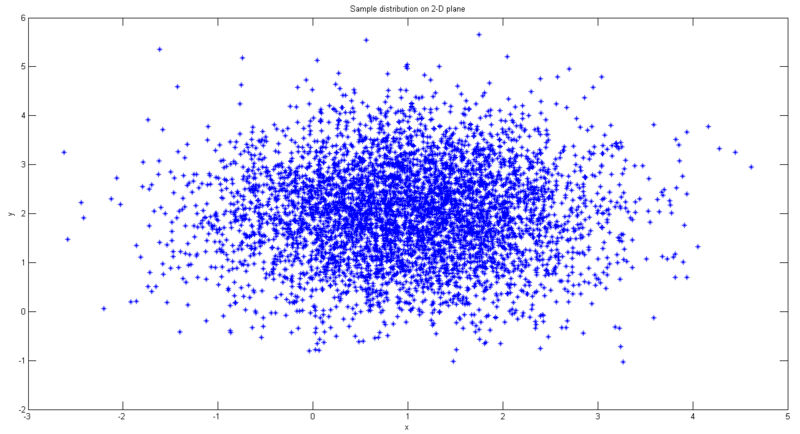

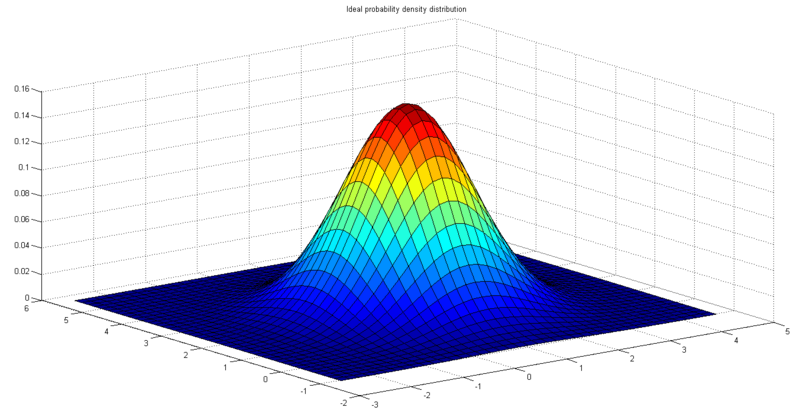

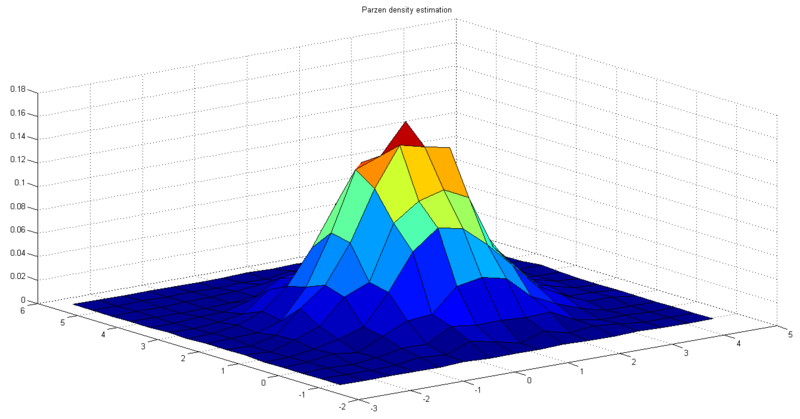

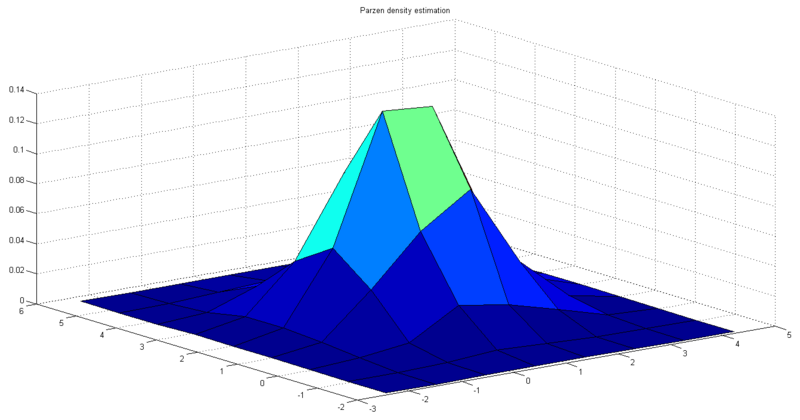

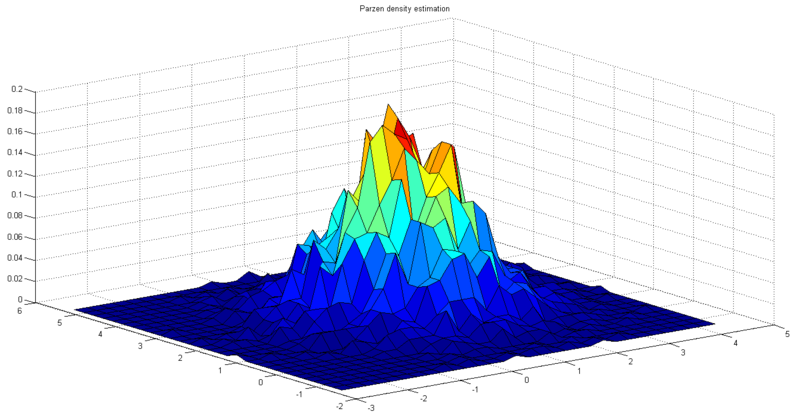

In this experiment samples are 5000 points on 2-D plane with Gaussian distribution. The mean vector is [1 2], and the covariance matrix is [1 0; 0 1]. Choose rectangle Parzen window with ![]() , thus

, thus ![]() . Fig. 1 shows the sample distribution. Fig. 2 shows the ideal probability density distribution. Fig. 3 shows the result of Parzen density estimation.

. Fig. 1 shows the sample distribution. Fig. 2 shows the ideal probability density distribution. Fig. 3 shows the result of Parzen density estimation.

Next we change the value of hn and see how it affects the estimation. Fig. 4 shows the result of Parzen density estimation when hn is twice its initial value. Fig. 5 shows the result of Parzen density estimation when hn is its initial value divided by two. We can see that the results agree with the aforesaid property of hn.

To design a classifier using Parzen window method [3], we estimate the densities for each class and classify the test point by the label corresponding to the maximum posterior. Below lists some advantages and disadvantages of Parzen density estimation:

Advantages: i) pn(x) can converge to any complex form when n approaches infinity; ii) applicable to data with any distribution.

Disadvantages: i) need a large number of samples to obtain an accurate estimation; ii) computationally expensive, not suitable for feature space with very high dimensions; iii) the adjustable constant h has a relatively heavy influence on the decision boundaries when n is small, and is not easy to choose in practice.

4. K-Nearest Neighbor Density Estimation

In k-nearest neighbor density estimation (use acronym “k-NN” in the following text) k is directly determined by n while v is a random variable which denotes the volume that encompasses just k sample points inside v and on its boundary. If v is a sphere, it can be given by

where h is the radius of the sphere with center x. hk equals ||xlk - x|| where xlk is the kth closest sample point to x. Then the probability density at x is approximated by

(3)

(3)where k1 is number of sample points on the boundary of vk(x). Most of the time formula (3) can be rewritten as

In Parzen density estimation vn only depends on n and is the same for all the test points, while in k-NN vn is smaller at high density area and is larger at low density area. This strategy seems more reasonable than the strategy to determine vn in Parzen density estimation since now vn is adaptive to the local density. In practice, when we want to classify data using k-NN estimation, it turns out that we can get the posterior p(wi|x) directly without worrying about p(x). If we have k samples fall into volume v around point x, and among the k samples there are ki samples belonging to class wi, then we have

The posterior p(wi|x) is given by

(4)

(4)where m is the number of classes. Formula (4) tells us one simple decision rule: the class of a test point x is the same as the most frequent one among the nearest k points of x. Simple and intuitive, isn’t it? Having said that, choosing k in k-NN is still a nontrivial problem as choosing h in Parzen density estimation. Small k leads to noisy decision boundaries while large k leads to over-smoothed boundaries, which is illustrated by the following example. In this experiment samples are 200 pre-labeled (red or blue) points. The task is to find the classification boundaries under different k values. Fig. 6-9 show the results.

In practice we can use cross-validation to choose the “best” k. Below lists some advantages and disadvantages of k-NN:

Advantages: i) decision performance is good if n is large enough; ii) applicable to data with any distribution; iii) simple and intuitive.

Disadvantages: i) need a large number of samples to obtain an accurate estimation, which is inevitable in local density estimation; ii) computationally expensive, low efficiency for feature space with very high dimensions; iii) choosing the “best” k is nontrivial.

5. Reference

[1] Mireille Boutin, “ECE662: Statistical Pattern Recognition and Decision Making Processes,” Purdue University, Spring 2014

[2] http://www.cse.buffalo.edu/~jcorso/t/CSE555/files/annote_28feb_nonprm.pdf

[3] http://www.csd.uwo.ca/~olga/Courses/CS434a_541a/Lecture6.pdf

Questions and comments

If you have any questions, comments, etc. please post them on this page.